Myo Gesture: External Testing v01 Results and Plans for v02

This week I began testing Myo armband gestures on other people. I wanted to start small because there was a chance that I could get through the tasks I created only because, well, I created them. That is exactly what I found: participants struggled because I had used the Myo before and they had not.

The testing is broken up into three sections: a conventions questionnaire, a task analysis on medium and large screens, and a follow-up questionnaire. I tested informally (like play testing) with two people and formally (running through the test as I would in a lab setting) with two more. What I found is that the tasks need to be built solely in canvas and cannot rely on the third-party mouse application. The main reason is that the Myo armband locks automatically after a few seconds, and participants were confused about why they had to keep unlocking it to control the mouse. During one run-through, the mouse controller crashed and stopped working completely. And because it is a third-party mouse controller, there is significant lag. I need to redesign and remake the tutorial and task pages to account for this. The tasks themselves are fine and give me the data I need to analyze, but so far the participants are struggling more than I expected.
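A rough sketch of what an in-canvas cursor could look like is below, written in TypeScript since the tasks run in the browser. It assumes some bridge (not shown) already delivers the armband's yaw and pitch in radians; the function names (`orientationToPoint`, `smooth`, `hitsButton`), the config fields, and the example values are all hypothetical and are not part of any Myo SDK. Computing and drawing the cursor ourselves removes the dependency on the third-party mouse application, and the smoothing factor makes the lag trade-off an explicit, tunable setting.

```typescript
// Hypothetical in-canvas cursor sketch. Orientation values are assumed to come
// from some Myo bridge that is not shown here; none of these names are Myo APIs.

interface Orientation {
  yaw: number;   // radians
  pitch: number; // radians
}

interface Point {
  x: number;
  y: number;
}

interface CursorConfig {
  width: number;      // canvas width in px
  height: number;     // canvas height in px
  yawRange: number;   // arm sweep (radians) mapped across the full width
  pitchRange: number; // arm sweep (radians) mapped across the full height
  smoothing: number;  // 0 < smoothing <= 1; 1 means no smoothing (fast but jittery)
}

// Wrap an angle difference into [-PI, PI] so crossing +/-PI does not make the cursor jump.
function angleDelta(a: number, b: number): number {
  let d = a - b;
  while (d > Math.PI) d -= 2 * Math.PI;
  while (d < -Math.PI) d += 2 * Math.PI;
  return d;
}

function clamp(v: number, lo: number, hi: number): number {
  return Math.min(hi, Math.max(lo, v));
}

// Map the arm's orientation, relative to a calibrated "center" pose, to canvas pixels.
function orientationToPoint(o: Orientation, center: Orientation, cfg: CursorConfig): Point {
  const nx = clamp(angleDelta(o.yaw, center.yaw) / cfg.yawRange, -0.5, 0.5);
  const ny = clamp(angleDelta(o.pitch, center.pitch) / cfg.pitchRange, -0.5, 0.5);
  return {
    x: (nx + 0.5) * cfg.width,
    y: (0.5 - ny) * cfg.height, // assumed: positive pitch (arm raised) moves the cursor up
  };
}

// Exponential smoothing: move a fraction `alpha` of the way toward the target each update.
function smooth(prev: Point, target: Point, alpha: number): Point {
  return {
    x: prev.x + alpha * (target.x - prev.x),
    y: prev.y + alpha * (target.y - prev.y),
  };
}

// Square hit test for a button of side `size` px centered at `center`.
function hitsButton(p: Point, center: Point, size: number): boolean {
  const half = size / 2;
  return Math.abs(p.x - center.x) <= half && Math.abs(p.y - center.y) <= half;
}

// Example usage with made-up values: a 1280x720 canvas, a 90-degree horizontal sweep.
const cfg: CursorConfig = { width: 1280, height: 720, yawRange: Math.PI / 2, pitchRange: Math.PI / 3, smoothing: 0.3 };
const center: Orientation = { yaw: 0, pitch: 0 };
let cursor = orientationToPoint(center, center, cfg);
cursor = smooth(cursor, orientationToPoint({ yaw: Math.PI / 8, pitch: 0 }, center, cfg), cfg.smoothing);
const onButton = hitsButton(cursor, { x: 740, y: 360 }, 60);
```

A smoothing value near 1 follows the arm almost instantly but jitters; smaller values steady the cursor at the cost of some lag, so it would be worth tuning against how participants react. Because nothing here depends on the armband's lock state, an in-browser version could also decide for itself when input counts, rather than leaving that to a separate application.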

## Findings

Even though there was some trouble with the tests, some tasks were still clearly easier than others. I will be rerunning these tasks over and over again, but so far my predictions have been correct.

### Conventions

I have been reading the book Brave NUI World, which focuses on touch and gesture interaction. In one section, the authors Wigdor and Wixon talk about in-air gestures, the kind we are testing here. They describe these gestures as “touching from afar.” One of my predictions was that touch conventions would spill over into gesture commands and inform them, and so far I am seeing just that. Many of the commands participants perform for my questionnaire mirror what are currently accepted as touch commands on smartphones and tablets. The best example of this is zooming.

In the video above, a participant demonstrates how they think zooming in and out would work with gesture commands. Remember that participants do these exercises before they ever use the Myo, so they do not become biased.

Later, the same participant ended up resting their hand on the table and using the table's surface much like a touch screen, almost like an invisible trackpad. Above you can see the participant demonstrating what panning left or right might look like.

Other gestures looked like more elaborate versions of their touch counterparts. Here the participant demonstrates going back and forward through pages or tabs. These wrist flicks are similar to how this command is accomplished on a touch screen. The difference is that the gesture uses the whole hand and wrist instead of just the index finger.

Participants found some gestures harder to come up with than others. They struggled most with clicking and with the idea of entering text.

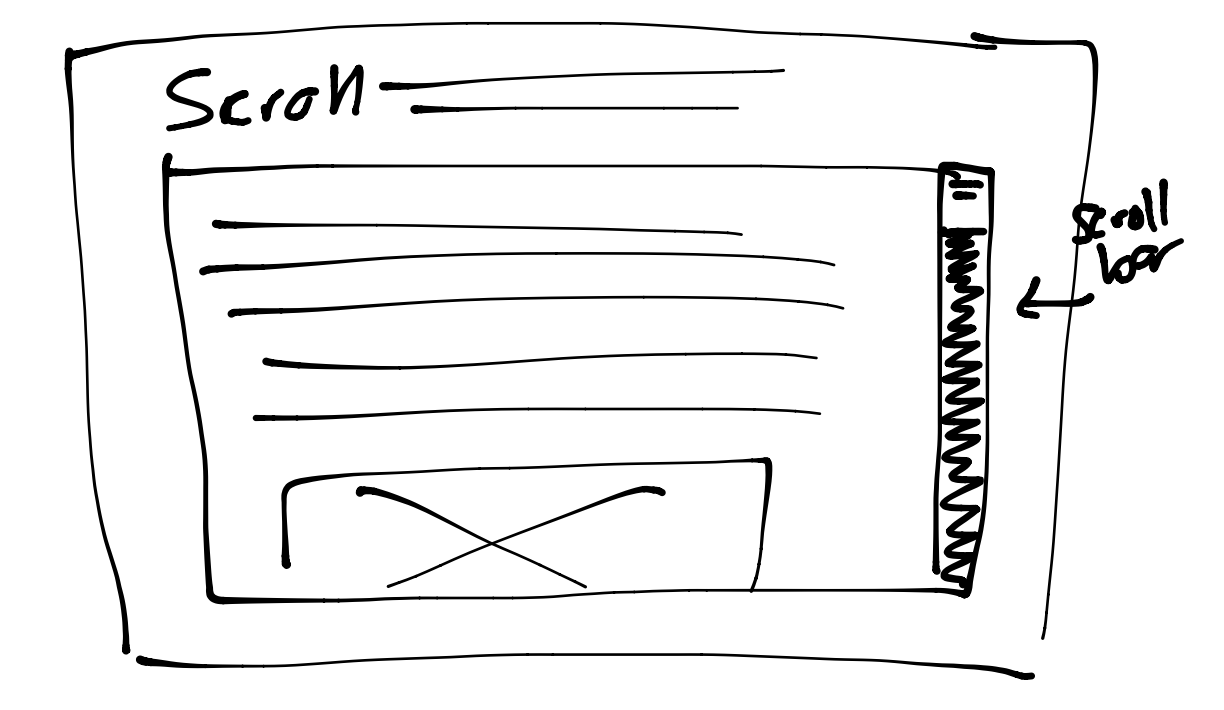

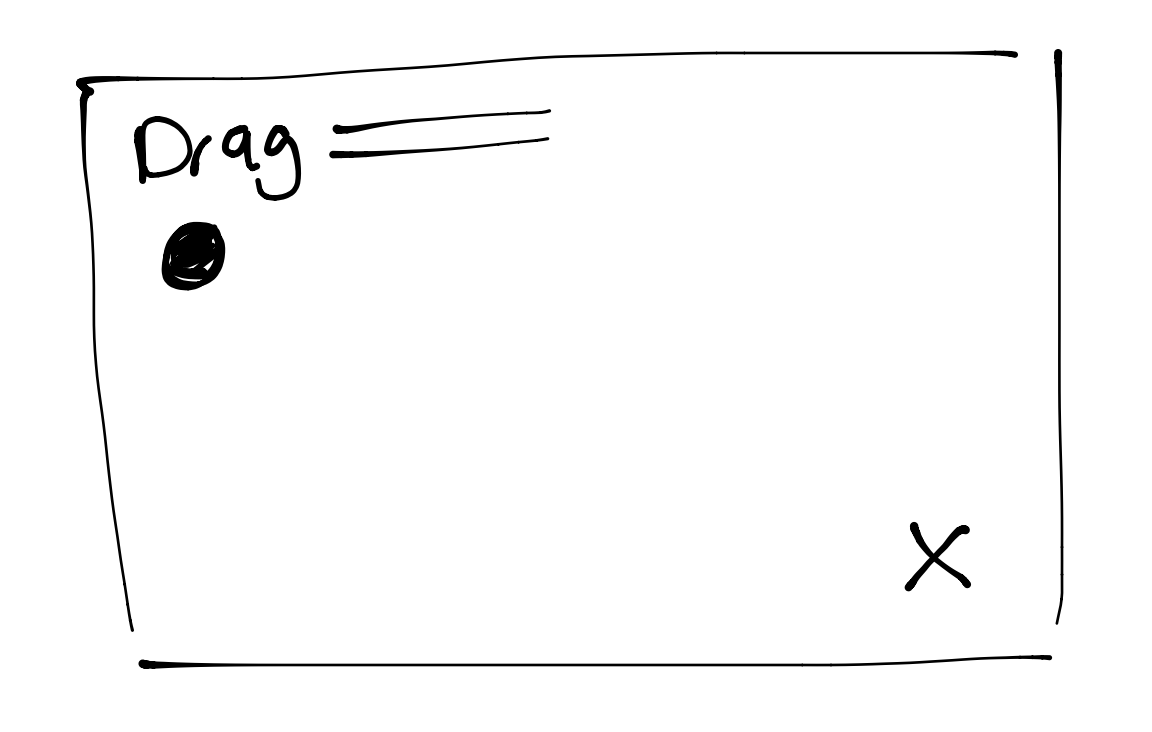

### Tasks

Dragging is the easiest task. As predicted, dragging was the easiest task for participants this week: they had the most success and the fewest failed attempts with the scrolling and dragging tasks. Participants also performed about the same on both screen sizes for these tasks.

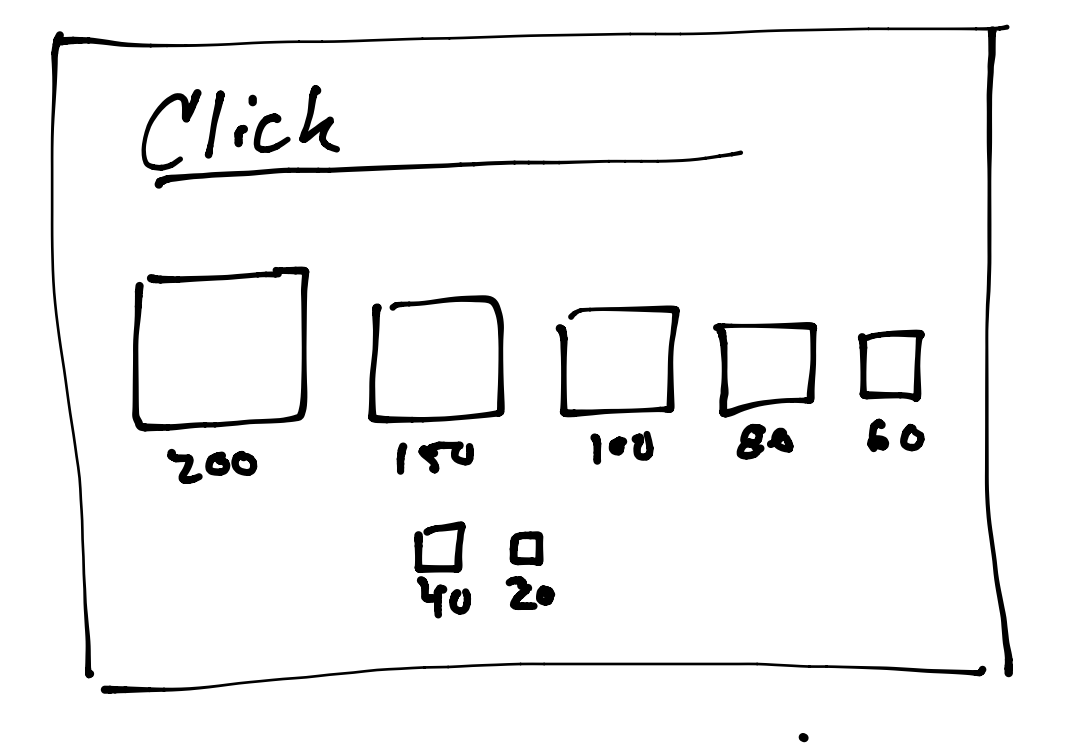

Buttons larger than 60px are easiest to hit. Again, this week's testing confirmed this. The 40px and 20px buttons were each hit successfully in two tests, but participants needed three or more attempts on average.

Participants perform better on a larger screen. This was not what I predicted last week. This week, participants seemed to run through the tasks faster on the larger screen. This is probably partly because they had never used the Myo before and had gotten the hang of it by then. I may need to test three groups instead: one with the mouse on a desktop, one with the Myo on a desktop, and one with the Myo on a projector.

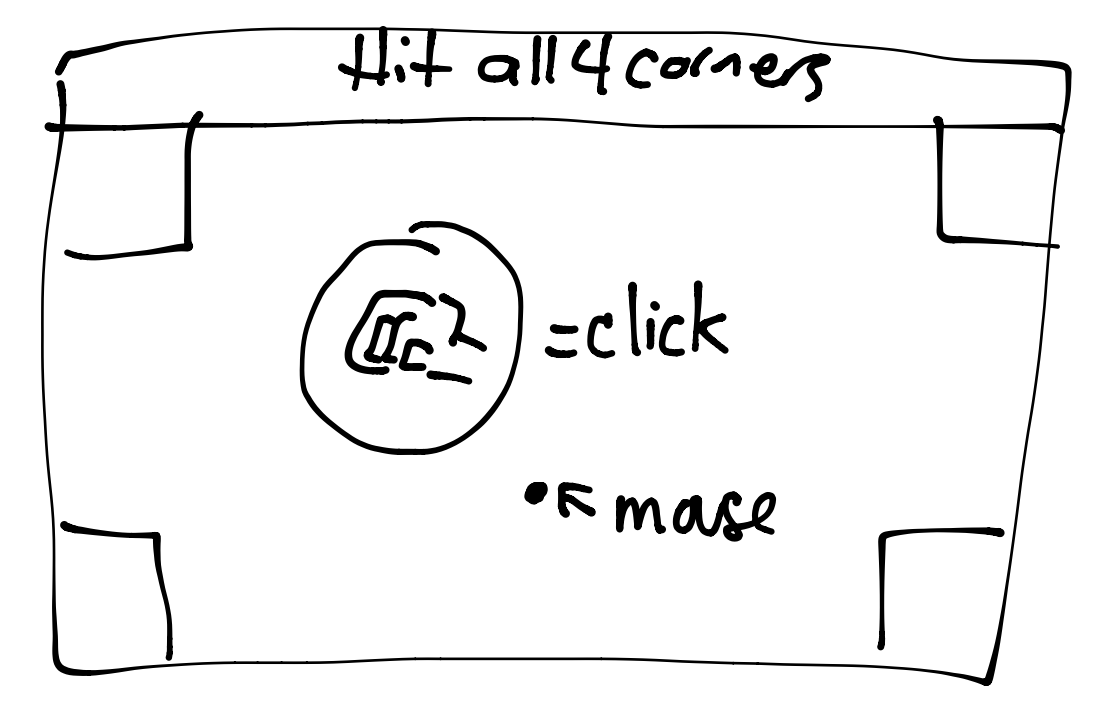

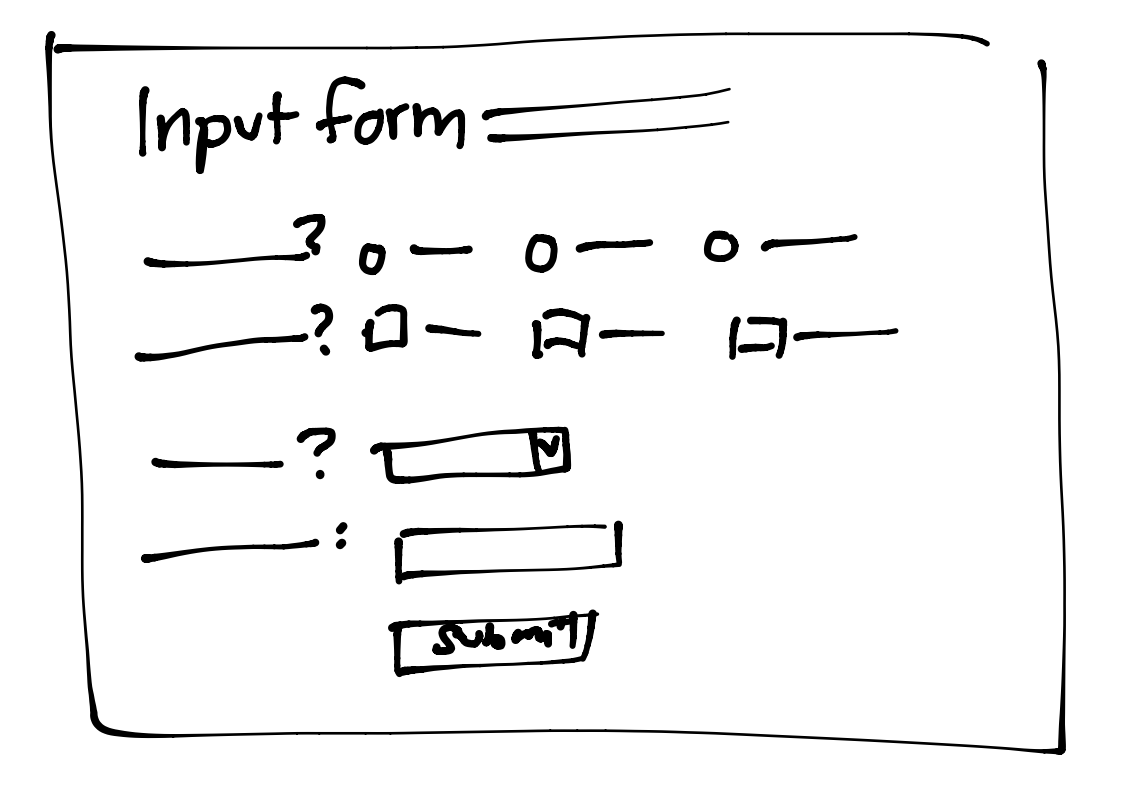

## Tutorial Changes

The tutorial definitely needs to be tweaked for future testing. Instead of having one page for all the tasks, I am going to give each task its own page. I am also going to make my own mouse controller in the browser to make things easier for the users. You can see sketches of this new tutorial and task setup below (they are very rough).